There was a time, from about age 13 until my mid-20s, when I was upgrading my computer in some way about every year, and the CPU was certainly getting replaced at least every 3 years and usually every 2. Then I went from a late-model P4 to a Core 2 Duo... and I didn't upgrade for 4 years (granted, I upgraded to one generation back when I got the Core 2). I then got an Ivy i5-3750 right after Ivy hit. We are now on Skylake and there is pretty much zero chance I'll upgrade to Skylake. I MIGHT upgrade to Skylake-E when it drops, depending on what there is of interest in it.

Even if you go for enthusiast edition parts, you don't have enough cores in one machine to reach that crossover point where code designed for massive parallelism is net-beneficial in terms of wall clock time. It's kind of like how the opportunities for parallelism in pure functional languages haven't (yet) been enough to make them outperform old-fashioned serial, imperative, mutate-in-place code running on ordinary desktops.

That's why I don't think that HPC scaling improvements are naturally going to trickle down to benefit users of Intel's latest multicore chips.
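To put rough numbers on that crossover point, here is a toy model in Python. It is only a sketch: the `overhead` and `serial_fraction` values are made-up assumptions for illustration, not measurements of NWChem or any real code, and the function names are hypothetical.

```python
def serial_time(work: float) -> float:
    """Wall-clock time of the tuned serial code: just the work itself."""
    return work


def parallel_time(work: float, cores: int, overhead: float = 8.0,
                  serial_fraction: float = 0.05) -> float:
    """Wall-clock time of the scalable code on `cores` cores.

    overhead: assumed factor by which the scalable code does more total
        work than the serial code (communication, copying, bookkeeping).
    serial_fraction: assumed share of that work that cannot be split up.
    """
    total = work * overhead
    return total * serial_fraction + total * (1.0 - serial_fraction) / cores


def crossover_cores(work: float = 1.0, max_cores: int = 4096, **model):
    """Smallest core count at which the scalable code wins, or None."""
    for n in range(1, max_cores + 1):
        if parallel_time(work, n, **model) < serial_time(work):
            return n
    return None


if __name__ == "__main__":
    # With the default (assumed) numbers the scalable code needs 13 cores
    # before it beats the serial code on wall-clock time.
    print(crossover_cores())
    # If overhead * serial_fraction >= 1, no core count ever catches up.
    print(crossover_cores(overhead=8.0, serial_fraction=0.125))
```

With these invented numbers the scalable code only pulls ahead at 13 cores, which is more than a typical desktop part offers. Change the assumptions and the crossover moves, or disappears entirely.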

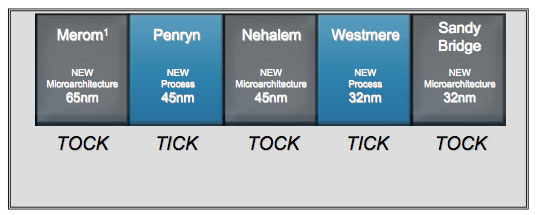

It's just too hard to do both ends of the scale well. So far nobody seems to offer software of this kind that runs great on a single machine and still runs great on a 2000-node cluster. But as somebody who no longer has access to enormously parallel HPC facilities, and who communicates with other researchers who never had HPC big iron, I really notice the compromises that come with prioritizing performance at high scale over performance at small scale.

I use NWChem frequently, and I've even had some of my patches accepted by its developers. It's currently the most capable of the open source quantum chemistry programs; only Psi4 is comparable. I started using NWChem in 2003, on DOE HPC facilities and on smaller machines, and the inconveniences and broken features on smaller machines have become worse over time. Yes, you'll see improvements in new NWChem releases to enable scaling to even larger machines. So users of leadership-class computing facilities get better software and the hardware gets more justifiable. That's good! But users who have just a single dual-socket server (or less) to run calculations on encounter slower code and more problems.

Why you're getting downvoted to hell is beyond me. My "Devil's Canyon" 4790k doesn't perform any better than my "Sandy Bridge" 2600k. They're both running at the exact same stable clock speed of 4.4GHz. Hell, the 2600k used to run at 4.8, but the damned thing is 5 years old, so it gets a pass on a bit of power loss. I don't foresee a need to upgrade any time soon on account of CPU.

It seems like if you want to actually get full use out of a processor, you have to be running some sort of gaming or rendering or... benchmark. Everything else is content to lag out and choke as soon as the one little thread it feels like running in is exhausted. Wouldn't it have been great if programmers had started writing everything to make use of multiple cores... you know, ever? I'll go out and buy one of those fucking Xeon Phi CPUs the instant I hear Intel has figured out a way to force poorly written code to run across multiple threads. Until then, buying new CPUs seems to only be for people building new systems.
